A few weeks ago, I finished a bug hunt for the RapidFTR open source project, which took me three evenings. I thought it might be worth sharing the story of the hunt.

This article will describe what I did. I will give an overview of my journey to actually find the root cause of what was going on. My goal with this article is to highlight examples of techniques you can use, to actually track down strange and random bug.

In the end, I found a pretty clear explanation what and why the problem happened. And it is not a coincidence, that there is a ‘random’ in the title of this article.

Start - What was the problem

The problem was, that when following the guide for using a development virtual machine, sometimes starting up the application took a long time and then failed. I had the issue right away, after starting the application twice. Other people had the same problem.

I was told, nobody really knew, why it was behaving this way - but restarting the VM would probably fix it. It didn’t for me.

But I enjoy digging into problems - so I wanted to know, what was going wrong.

Rake File to Solr

The error occurred when running rake app:run, sometimes. From running rake with –trace –verbose, the debug option, I was able to see, that the application was waiting for sunspot to start the Apache Solr search service.

So the question was, where does the process take all the time? The good thing to start with was, that rake and sunspot are written in Ruby. So I read the code and added a few output statements to see, which statement took so long. It turned out, it was the Java process starting Solr.

Digging into the Java

Apache Solr sends some output to sdtout - but sunspot hides it from you. But as any output is very helpful when debugging, I wanted to see the output.

I used ps -aux to find the exact command-line arguments sunspot was issuing and started the process manually from the command-line. Luckily, it still took some time - so I knew the problem was not in the Ruby Code.

With the port mapper nmap I found out, that it took between 2 seconds to over 2 minutes to start the service. But the rake task only waited for 10 seconds.

Now having the the console output, I could see, that Solr internally uses Jetty - and it was Jetty in version 6.1.3, which took all the time - in the case, it took the time. So I downloaded the tag for version 6.1.3 from source control and looked into the code.

I found a system property called DEBUG which increases the output. I enabled it and saw that the last debug output, before it took so long, was something about starting the session service (I’ve lost pointer to the code - unfortunately).

This didn’t really help me. I unsuccessfully tried to make the Jetty log work with log4j to eventually see more - but I failed and had to give up for the evening (the 2nd one).

Debug the Java Code

So if your not getting anything out of the log, you can still use a debugger. The code was however failing on the VM - an Ubuntu box, but not on my local machine. But fortunately Java has a remote debugger.

I started the Java process with the remote debugging arguments and attached a listener in IntelliJ with the Jetty code from SVN. I tried to get the execution stopped at a breakpoint near the last log output - but this method was called very often and I didn’t get it to hit the breakpoint at the right moment.

However, when something takes very long, it can be helpful just to stop the running process and look at the stack trace. So I stopped the execution and searched for the last method frame from Jetty, which was calling into the JDK - and there I found the following comment:

1 2 3 4 5 | |

The Solution

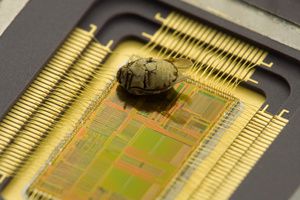

Finally - I did go to the Jetty wiki page referenced. I found out, that Java’s java.util.SecureRandom uses real entropy from the operating system - which can block when there is none available.

The solution from the wiki page, just to use the normal java.util.Random, would have been difficult, because the configuration file lives deep inside sunspot and Solr. But the line ‘NB Some workaround reports use /dev/./urandom instead of /dev/urandom’ gave me a hint, were to look otherwise.

I googled for the solution and found on Stack Overflow, that you can replace /dev/urandom random with /dev/./urandom in your Java process. This can be done by using the command-line argument -Djava.security.egd=file:/dev/./urandom or by replacing it in the file /usr/lib/jvm/java-6-openjdk/jre/lib/security/java.security (on an Ubuntu box).

So what was the matter? Java’s secure random will not accept /dev/urandom as source for random numbers. /dev/urandom is unblocking, which means it will return random numbers which can be guessed - when there is no real entropy available. So Java defaults to /dev/random which will block and wait, until entropy is present.

This is the reason, why blocking is very unlikely to occur on a production or development machine - however it did happen, when using a virtual machine, which does not have a lot of network traffic and IO. It happens especially often, when you restart an application often - as you tend to do, when something is not working.

The fix is a little funny. Java rejects /dev/urandom based on the string. It does not reject /dev/./urandom which of course points to the same potentially unsafe random number generator.

Last Words

The fix introduces a potential security risk - but on a development virtual machine this is not a problem.

As you can see, finding a bug or problem in a system involves to use techniques, which bring you nearer to the root cause. However, there is no golden path. Sometimes one technique will not get you any more information. So you will have to try a different technique. Sometimes your gut feeling might help you as well.

Having arrived here, what are your thoughts on the story?